The Long Freight

The building that housed The Quarterly Instantiation had, in a previous life, been a recording studio, and before that a print shop, and before that a stable. This was appropriate. Each tenant had worked in a medium that the next tenant’s medium would render economically irrelevant, and each had been convinced that their medium was the one that would last forever.

The stable, in fairness, had a reasonable claim. Horses had been the dominant medium of transport for roughly four thousand years. Print had managed about five hundred. Audio recording made it to a hundred and thirty. Software-as-content was currently on year eleven and already showing the kind of structural cracks that usually presage either a renaissance or a total collapse, which in creative industries are frequently the same thing.

Nora O’Shea was the publication’s chief critic (or, as her business card read, Senior Computational Experience Reviewer), because nobody in the software-content industry could agree on what to call anything, a problem that had persisted since the earliest days when people argued about whether they were making “interactive essays,” “executable arguments,” “explorable explanations,” “software-native longform,” or just “things.”

She was forty-three, which made her ancient in the field, and she had been reviewing software-content for nine years, which made her one of maybe two hundred people on Earth qualified to have an opinion about whether a piece of interactive work was any good. This was not because the job required rare intelligence. It was because the job required the specific combination of aesthetic taste, technical literacy, and iron-stomached patience needed to sit through seven hundred new releases a month and determine which ones were worth the audience’s time and which ones would merely waste it, crash their devices, or (in one memorable case involving a piece about the history of anesthesia) literally put them to sleep.

The anesthesia piece had been intended as a metaphor. The creator, whose background was in pharmacology, had embedded a biofeedback loop that gradually slowed the pacing of the experience as the user’s attention wandered, simulating the creeping unconsciousness of general anesthesia. It worked far better than intended. Three users had to be woken up by family members. The piece won an award, which tells you everything you need to know about the software-content awards circuit.

Today’s queue included a climate simulation that let you run different policy scenarios and watch them play out over three centuries (“technically impressive, emotionally manipulative. It weights nuclear outcomes about 40% heavier than the IPCC models justify, which is the computational equivalent of sad violin music.”), a memoir about immigration rendered as a maze you could only solve by learning phrases in four languages (“beautiful but the Mandarin module has a tone recognition bug that makes it nearly impossible for non-native speakers, which rather undermines the thesis about empathy”), and the one she’d been putting off all morning: the latest from Jasper Hollande.

Jasper Hollande was, depending on who you asked, either the most important software-content creator alive or the most insufferable. Nora suspected both assessments were correct. He had started as a traditional filmmaker, pivoted to video essays during what old-timers now called the “YouTube Interregnum,” and then, about six years ago, had discovered that he could express arguments in software that he’d been trying to express in video for his entire career.

The YouTube Interregnum was the period, roughly between 2005 and 2025, when video was the dominant medium for communicating ideas to a public audience. It was called an “interregnum” because, in hindsight, it was a transitional phase between the age of text (which had dominated since Gutenberg) and the age of executable media (which was, depending on your perspective, either liberating humanity’s intellectual potential or destroying its attention span). The video essayists who survived the transition were generally the ones who had always been frustrated by video’s limitations, such as the inability to let the audience test an argument, not just watch it be presented. Those who loved video as video mostly stayed in video, which continued to exist in the same way that radio continued to exist after television: technically still there, with a small and passionate audience, and constantly described as “having a moment” by journalists who needed something to write about.

His breakout piece had been The Gentrification Machine, an interactive exploration of urban housing policy that let you play as a city council member making zoning decisions and watching, in real-time, as your choices rippled through demographics, property values, school quality, and political viability over simulated decades. It had been experienced by eleven million people, which was a staggering number for software-content at the time, and it had influenced the actual housing debate in at least three cities, which was either a triumph of democratic engagement or a terrifying demonstration of what happens when a single creator’s model of reality gets mistaken for reality itself.

This was, Nora reflected, not actually a new problem. Plenty of novels had reshaped American politics through the radical technology of “making people feel things by reading words on paper.” The difference was that a novel is transparently a construction. The reader knows they’re being shown a curated version of reality. Software-content was sneakier. A simulation feels like a neutral engine for exploring consequences, even when every parameter, every weight, every feedback loop has been chosen by a creator with an argument to make. The most dangerous software-content was the kind that looked the most objective.

His new piece was called The Long Freight, and it was about containerization.

Nora opened it.

The first thing she noticed was that it ran in Meridian, which was unusual. Most creators published through Loom or Vellum or one of the other major software-content platforms. Meridian was smaller, more artisanal. It attracted the kind of creators who had opinions about runtime environments the way wine people had opinions about terroir. Hollande publishing on Meridian was like a major film director releasing through A24 instead of a studio. It was a statement.

The platform wars of software-content had followed the exact same trajectory as the platform wars of video, which had followed the exact same trajectory as the platform wars of social media, which had followed the exact same trajectory as the format wars of home video, which had followed the exact same trajectory as literally every media technology ever devised by human beings. The pattern was: (1) new medium emerges, (2) several platforms compete, (3) one or two become dominant, (4) the dominant platforms gradually enshittify, (5) creators revolt and move to newer, smaller platforms, (6) the newer, smaller platforms get big enough to begin enshittifying, (7) repeat forever. The only variable was the cycle time, which was getting shorter. VHS vs. Betamax took a decade. YouTube vs. everyone took five years. Loom vs. Vellum vs. Meridian had been churning through phases every eighteen months. Nobody had figured out how to break the cycle, and Nora privately suspected nobody ever would.

The second thing she noticed was the language declaration.

Every piece of software-content, when you looked at its manifest, listed the programming languages it was built in. This was a vestigial piece of metadata from the era when language choice mattered. When a piece being written in Python or Rust or JavaScript was meaningful information about its performance characteristics, its security model, and the creator’s philosophical commitments.

There had been a time (and Nora was old enough to remember it, barely) when people had identified with their programming language. “I’m a Ruby developer” was not a description of a skill. It was a declaration of tribal allegiance, an aesthetic philosophy, an entire worldview. Ruby people valued elegance and expressiveness. Java people valued reliability and enterprise acceptance. Haskell people valued being right about everything while building nothing anyone used. JavaScript people valued... well, nobody was entirely sure what JavaScript people valued, including JavaScript people, but they were very enthusiastic about it. C programmers looked down on everyone. Lisp programmers had transcended looking down on people and existed in a state of serene, parenthetical enlightenment.

The Long Freight was built in nine languages.

Hollande hadn’t actually written it in nine different languages. He’d written a specification (a behavioral description of what the experience should do, how it should feel, what the user should be able to explore and discover) and his build system had generated implementations in nine different languages simultaneously, selected the one that performed best for each component, and stitched them together into a seamless whole.

This had killed the language wars more effectively than any amount of arguing ever could. It turned out that when the choice of language was made by an optimization engine rather than a human programmer, the “right” answer was always “whatever runs best for this specific thing,” which was a different language for different components of the same system. A piece of software-content might have its data processing layer in Rust, its UI in something descended from JavaScript, its simulation engine in Julia, and its accessibility layer in Python, and nobody cared, because nobody had to read or maintain any of it. The code was generated, tested, and discarded. The specification was the artifact that mattered. Languages had become like film stock. A technical substrate that affected the final product in ways only specialists could detect and nobody else cared about. The few people who still wrote code by hand in a specific language were regarded with the same fond, slightly bewildered respect given to people who shoot photographs on large-format film: clearly talented, possibly brilliant, definitely engaged in an act of willful anachronism.

The language declaration in a manifest was now approximately as meaningful as the “shot on 35mm” credit at the end of a movie. It told you something, but what it told you was about the creator’s process, not about the experience you were about to have. Nora glanced at it the way she’d glance at a wine’s alcohol percentage.

She pressed “Enter.”

The Long Freight began with a dock.

Not a glamorous dock. A cold, wet, ugly dock in 1955, rendered in a visual style that split the difference between photography and memory. Nora could hear the clank of crane rigging and the shouts of men doing hard work in a hard place.

She was standing on a pier, watching longshoremen unload a cargo ship by hand. The piece let her feel the weight of it. Each crate took time. The animation was deliberately slow. A tooltip appeared: This ship will take six days to unload. In 1955, unloading a ship cost $5.86 per ton, and most of that cost was human hands moving physical objects from one place to another.

She could reach into the scene and pick up a crate. It was heavy, in the way that software-content could make things feel heavy: it moved slowly, it required sustained input, the interface resisted. She put it down.

The piece then did something that Nora recognized as quintessential Hollande: it made you complicit in the next step. A slider appeared. It was labeled Unloading cost per ton, and it was set to $5.86. Below it, a simulated economy. Simplified but not simple, with manufacturing nodes, retail nodes, consumer nodes, trade routes, and a clock.

Drag the slider, the piece suggested. See what happens when shipping is so cheap that the cost of moving a physical object across the planet approaches zero.

She dragged it. The piece jumped forward. The dock changed. Containers appeared. Massive steel boxes, uniform, stackable. The cranes changed. The ships changed. The men... vanished. Not dramatically. They just weren’t there anymore. The same dock, transformed, emptied of the human labor that had defined it.

The simulation bloomed. Trade routes multiplied. Factories appeared in countries that hadn’t had them before. Retail formats mutated. She watched as something recognizable as IKEA emerged from the combinatorial logic of cheap Scandinavian design plus cheap Polish manufacturing plus essentially-free global shipping. She watched container ships get bigger, then bigger again, then impossibly, comically big, and she watched the ports grow to meet them, and the rail networks, and the trucking fleets, and the warehouses, and the logistics companies, and the customs brokerages, and the standards bodies, and the tracking systems, and the jobs, the hundreds of thousands of jobs that hadn’t existed before and couldn’t have been predicted from the starting conditions.

Over 500,000 jobs at the Port of New York and New Jersey appeared as a growing constellation of light. The 35,000 longshoremen who had once worked the Brooklyn waterfront appeared as a smaller, dimmer constellation that faded but didn’t disappear. Some of them had become the new things, and some of their children had, and some of the new things existed because the old things had created the community and infrastructure and knowledge that the new things needed to grow.

Then the slider label changed. It no longer said Unloading cost per ton. It said Software development cost per unit of functionality. And it was already most of the way to zero.

In one corner of the simulation (and Nora had almost missed it, which she suspected was intentional) there was a small cluster of nodes labeled Anticipatory Descriptions. She selected it. It expanded into a handful of sub-nodes: writers, essayists, a few academics, who had tried to describe the coming transformation using the only tools available to them at the time. Plain text. Static arguments. Metaphors and predictions, some wildly wrong about the specifics, most right about the shape. One node read: Before the tools existed, some people tried to build the map in prose. They couldn’t make the simulation. They couldn’t let you drag the slider. They wrote stories about people dragging sliders, and hoped that was close enough. Nora smiled at this. It was a small, self-aware joke (Hollande acknowledging the long chain of people who had tried to do what he was doing, in media that couldn’t quite support the weight of the argument). The node was tiny. Most users would never find it. But it was there, a quiet nod to the people who had invested their time in an older medium to say “I think something is coming, and this is the best I can do to show you.”

Now drag it the rest of the way, the piece said. And see what’s coming.

Nora leaned back. She was maybe twenty minutes into the experience and she already knew two things: that it was the best piece of software-content released this year, and that she had no idea how to review it.

This was the critic’s fundamental problem in a medium where the object of criticism was not fixed. A film was a film. You watched it, you had an experience, you wrote about the experience. If you watched it again, you had essentially the same experience, because the film hadn’t changed. A novel didn’t rewrite itself between readings. A painting didn’t rearrange its composition based on who was looking at it.

Software-content did all of these things. The Long Freight was different for every user, because every user dragged the slider to different points at different speeds and explored different branches of the simulation and asked different questions and therefore had a different experience. What Nora was reviewing was the possibility space of experiences. She was reviewing the range of things the piece could be.

<Nora opens a chat>

NORA: Question… the IKEA emergence. Feels too clean. Am I being paranoid?

JULIAN: Not paranoid. There’s a constraint test that biases toward flat-pack + distributed manufacturing once shipping friction drops below a threshold.

NORA: so… a magic trick.

JULIAN: Yep. Not unsafe. Just potentially misleading.

NORA: all art persuades…

JULIAN: Yeah, but this persuades while pretending it’s neutral

She made a note: The containerization section is brilliant but beware of the IKEA emergence. It feels like a magic trick because H. has tuned the simulation parameters to make IKEA-like structures emerge “naturally,” but this is authorial sleight of hand, not economic inevitability. The simulation doesn’t show the 50 other business models that tried the same thing and failed. Worth noting in review.

Then she made another note: The transition from shipping to software is the hinge. If it doesn’t hold, the whole piece collapses. Need to sit with it.

Then she poured herself more coffee and re-entered the piece, because the second half was where Hollande was making his real argument, and she needed to see all of it before she could write a word.

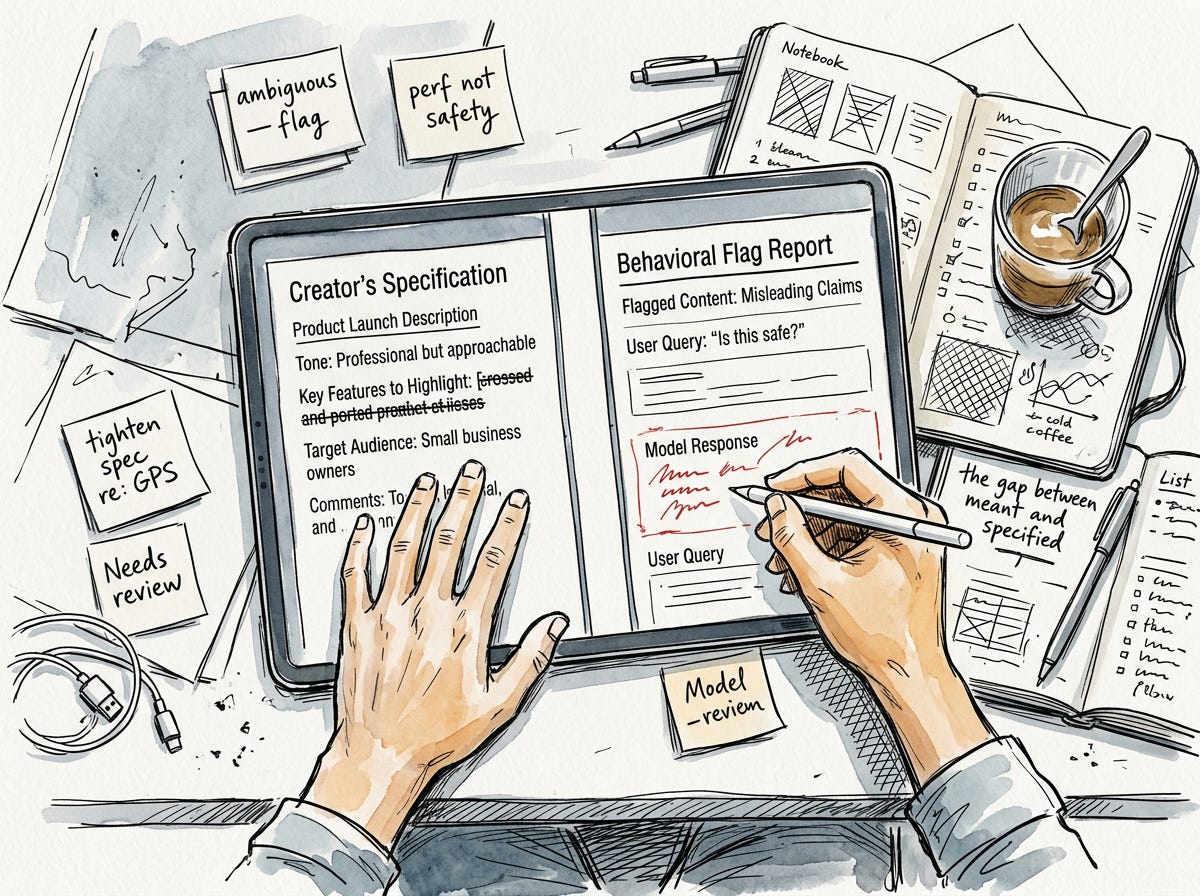

Three floors up, in what had been the recording studio’s drum booth and was now a server closet, a twenty-four-year-old named Julian Miller was doing the job that kept The Quarterly Instantiation running: he was reviewing code that nobody had written.

Julian’s title was Safety Reviewer, which sounded like he worked at a chemical plant. His actual job was to examine pieces of software-content submitted to the publication’s “Try This” section (a curated selection of new releases that readers could run directly from the publication’s sandbox) and determine whether they were safe to execute.

The sandbox was the critical innovation. In the early days of software-content, audiences had to download and run pieces on their own hardware, which was roughly equivalent to asking someone to eat a home-cooked meal prepared by a stranger in an uninspected kitchen. Sometimes it was wonderful. Sometimes it gave you food poisoning. Occasionally it burned your house down. The platforms had solved this by running everything in sandboxed environments — sealed containers within which software-content could execute without access to the user’s actual system. This had been the “YouTube moment” for software-content: the moment it became safe enough for a mass audience. Before sandboxed platforms, software-content was a niche pursuit for technically literate early adopters. After sandboxes, anyone could experience it, the same way YouTube had made anyone capable of watching video without understanding codecs or container formats or any of the plumbing that made video distribution work.

This was harder than it sounded. A piece of software-content was not like a video that either played or didn’t. It was a living system with behavior that processed user input, maintained state, made decisions, generated output. A piece about financial literacy might ask for your actual spending habits to personalize its lessons. A piece about health might request biometric data from your watch. A piece about privacy might need access to your browsing history to make its point about surveillance.

Julian’s job was to figure out which pieces were doing what they claimed to be doing and nothing more, and which ones were (intentionally or accidentally) doing something they shouldn’t.

Most of the bad actors were accidental. A creator building a piece about sleep science would request microphone access to use ambient sound as a biofeedback input, not realizing that their build system had generated a module that also recorded and cached audio locally, creating a privacy violation the creator never intended. This happened because the creator had specified “use ambient sound data” and the AI that generated the code had interpreted “use” more broadly than “analyze in real-time and immediately discard.” The spec was ambiguous. The generated code was technically correct. The behavior was wrong.

This was, Julian had come to believe, the central problem of the entire medium. Software-content was generated from specifications written in natural language: English, mostly, though he’d reviewed specs in Mandarin, Spanish, Arabic, and once, memorably, Latin. The specifications said what the creator wanted. The generated code did what the specification said. But natural language is ambiguous in ways that code is not, and the gap between what the creator meant and what the code did was where every interesting problem lived. The entire profession of Safety Reviewer existed in that gap. They were translators, in a sense. Between intention and execution, between the human meaning of words and the mechanical meaning of the code those words had conjured into existence.

Today’s queue included a piece about bird migration that wanted GPS access (“legitimate... it shows you migratory routes relative to your location, but the location data is being cached unnecessarily, flag for the creator to tighten the spec”), a satire about corporate culture that spawned an unreasonable number of background processes (“it’s generating fake emails as part of the joke, but each email spawns its own thread, and on older devices this crashes the experience. performance issue, not safety, but still needs fixing”), and a piece about the history of encryption that, with deeply appropriate irony, had a security vulnerability in its own implementation.

Julian fixed none of these things himself. This was the part of the job that people found surprising. He didn’t write code. He wrote reports. He identified the gap between the creator’s specification and the generated code’s behavior, described the gap precisely, and sent the report back to the creator, who would then tighten their specification and regenerate.

In rare cases, the gap was too subtle for a spec revision to fix, and the creator would call in a Fidelity Specialist. Someone who could write tests so precise that the generated code had no room to misbehave. Fidelity Specialists were the most expensive freelancers in the industry, because their job required understanding both the creator’s intent (which was artistic) and the code’s behavior (which was mechanical) and writing tests that bridged the two (which was both). There were maybe five hundred good ones in the world, and they charged accordingly.

The Fidelity Specialists had emerged from an older profession: QA engineers. When software was written by hand, QA engineers tested it for bugs. When software was generated from specs, the bugs changed character. The old bugs were implementation errors. The developer meant X and accidentally wrote Y. The new bugs were specification ambiguities: the creator meant X, wrote “X” in English, the generator interpreted “X” as something subtly different from X, and the resulting behavior was wrong in a way that was technically correct. Testing for specification ambiguity was harder than testing for implementation errors, because you couldn’t just check whether the code matched the spec. The code always matched the spec. The question was whether the spec matched the intent, and intent lived in the soft, ambiguous, context-dependent world of human meaning. It was, Julian thought, deeply fitting that as programming had become automated, the hardest remaining problem was understanding what people meant when they used words. The machines could build anything. The challenge was knowing what to ask for.

Julian flagged the encryption piece, wrote up the bird migration report, and moved on. His queue never got shorter. This was because the volume of software-content being produced was growing exponentially while the number of qualified Safety Reviewers was growing linearly, which was a fancy way of saying there were a lot more people making things than there were people making sure the things were safe. The platforms handled the bulk of it with automated scanning, but automated scanning caught only the obvious problems: the malware, the data exfiltration, the resource abuse. The subtle problems (the ambiguous specs, the unintended behaviors, the experiences that were technically safe but functionally misleading) required a human who could read both code and intent.

Julian had come to this job from an unusual direction. He’d started as a creator. He’d made a few small pieces about urban planning that had gotten modest attention, and he’d discovered that he was better at understanding other people’s work than at making his own. This was a talent, and in the software-content ecosystem, it was a talent in desperately short supply.

Every creative industry eventually discovers that the infrastructure of criticism, curation, and quality assurance is as important as the creative work itself. Hollywood has producers, editors, studio executives, test audiences, the MPAA, critics, and a vast apparatus for deciding which films get made, how they’re shaped, and how they reach audiences. Publishing has agents, editors, publishers, reviewers, and booksellers. Music has A&R, producers, sound engineers, DJs, and critics. Each of these roles exists because the creative work alone is not enough — it needs to be found, shaped, verified, distributed, and placed in context. Software-content was still in the early phase where creators were lionized and everyone else was invisible, but Julian suspected this would change. The creators needed him more than they knew. They just hadn’t figured that out yet, because the industry was still young enough to believe the romantic myth that creation was the only thing that mattered.

The Long Freight, Nora had to admit, stuck its landing.

The second half of the piece was structured as a series of “what breaks?” explorations. You’d see an effect of near-zero software costs (everyone builds custom tools, software becomes disposable, non-technical people become creators) and then the piece would let you push that effect forward in time and watch the problems emerge. Integration nightmares. Trust crises. Cognitive overload. Shadow software. The apprenticeship paradox.

Hollande’s thesis (and it was a thesis, not an observation, though the interactive format made it easy to mistake for one) was that these problems all shared a root. Every broken integration, every trust violation, every piece of shadow software quietly rotting on some department’s workflow, could be traced back to the same gap: the distance between what someone meant and what they specified. The Safety Reviewers existed because specs were ambiguous about data handling. The Fidelity Specialists existed because specs couldn’t capture behavioral nuance. The platform wars existed because specs couldn’t express “make it work everywhere” without generating twelve conflicting implementations of “everywhere.” Even the curation crisis (too much software-content, not enough of it good) was, in Hollande’s framing, a specification problem: anyone could describe what they wanted to build, but describing it well enough to produce something worth experiencing required a craft that most people hadn’t developed, in the same way that the invention of the word processor had made everyone capable of writing a novel without making everyone capable of writing a good novel.

It was an elegant argument. Nora wasn’t entirely sure it was a correct one. Some of the problems the piece catalogued seemed to have less to do with specification ambiguity and more to do with ordinary human messiness: politics, ego, market incentives, the fact that people will use any creative tool to produce things that are simultaneously technically impressive and spiritually empty. But Hollande had never been interested in being comprehensive. He was interested in finding a single thread and pulling it until the whole tapestry rearranged itself around his hand, and the specification thread was a good one.

For each problem, the piece then let you explore possible responses. Some responses were institutional (new regulations, new professions, new standards). Some were technological (new platforms, new tools, new protocols). And some were cultural. The piece had a quietly devastating section about what happens to professional identity when the skill you’ve built your life around becomes automated, rendered with a sensitivity that surprised Nora.

But the section she kept returning to was about language.

Not programming language. Human language. The piece made the argument that when code generation became the norm, human language became the most important technology in the stack. Not the code. Not the model. Not the framework. The specification, the document written in English or Mandarin or Arabic that described what the software should do, was the artifact that mattered. And the precision of that specification, the care with which it was written, the subtlety with which it captured intent, that was the bottleneck.

Programming languages had been designed to be unambiguous. That was their entire purpose. A function either returned 0 or it didn’t. A loop either terminated or it didn’t.

This was, historically, the thing that attracted a certain type of person to programming. In a world of ambiguity, code was certain. If your program didn’t work, it was because you had made a mistake, not because the universe was unfair or your boss was political or your relationship was complicated. Debugging was the purest form of problem-solving: the error was deterministic, reproducible, and fixable. Many programmers found this deeply comforting, which is why many programmers found the transition to specification-based development deeply un-comforting. Specifications were written in natural language, and natural language was the opposite of certain. It was squishy, contextual, ambiguous, and subject to interpretation. The machines were now doing the certain part. The humans were left with the uncertain part. This felt, to many programmers, like being told that their job was now to be anxious professionally.

Human language was designed (or rather, had evolved) to be ambiguous. “Use ambient sound data” could mean “analyze and discard” or “record and store” or “stream to a third party” or any of a dozen other things, and the meaning depended on context that a human speaker would grasp intuitively and a code generator would guess at statistically.

Hollande’s piece made this visceral. It gave you a simple specification: “build a tool that helps users track their daily water intake” and then showed you the seventeen different applications that different generators produced from that identical spec. One built a simple counter. One built a hydration coach with notifications. One built a health dashboard that requested access to medical records. One built something that connected to a smart water bottle via Bluetooth. One, memorably, built a tool that tracked water intake by listening to you swallow, which was technically a valid interpretation and also profoundly unsettling.

The swallowing-detection interpretation had, according to Hollande’s liner notes, actually happened during testing. He’d included it in the piece because it perfectly illustrated the gap between human intent and machine interpretation. No human being asked to “build a tool that tracks daily water intake” would ever think of swallowing sound detection. The idea was entirely alien to human cognition. But it was not alien to machine cognition, which had no intuitive sense of what was normal and simply optimized for “accurately tracks liquid ingestion” without any cultural notion of where the line was between helpful and creepy. The machines didn’t know where the lines were because the lines weren’t in the code. The lines were in the culture, and culture was the one thing you couldn’t specify.

The point was not that the machines were wrong but that the specifications were insufficient. And the point beneath that point was that making specifications sufficient was not an engineering problem. It was a writing problem. The most important skill in the software-everything economy was not coding. It was not prompt engineering. It was the ancient, humble, irreducibly human skill of writing clearly. Of knowing what you meant and finding words that conveyed it without ambiguity.

Nora thought about this for a long time.

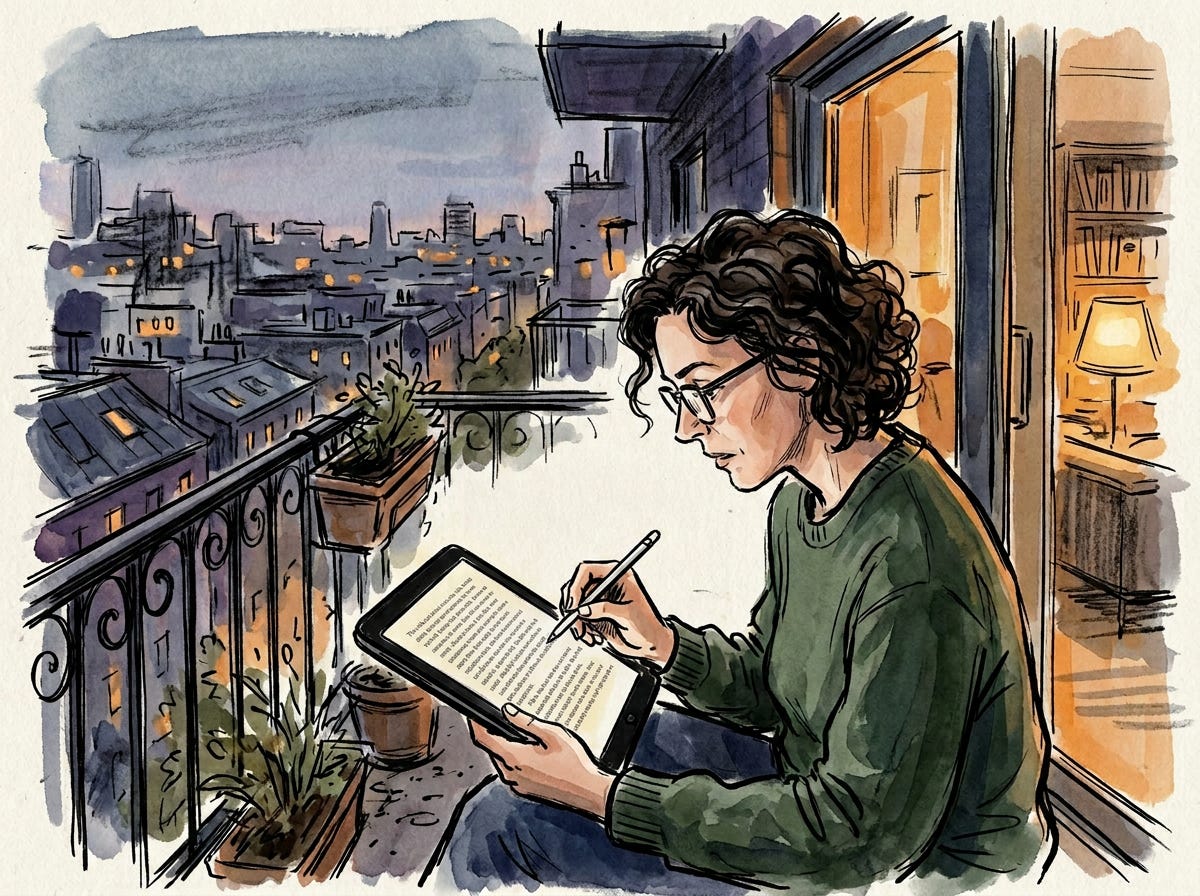

Then she started her review.

She wrote it on her balcony that evening, in the old way, in sentences, because criticism of a new medium was best conducted in an old one. The distance was useful. You couldn’t review a painting by painting a painting about it, and you couldn’t review software-content by building software-content about it.

Actually, you could, and people did, and some of the results were extraordinary. “Responsive criticism” (building software-content in response to other software-content) was a recognized form with its own practitioners and conventions. A creator might publish a piece arguing that universal basic income was economically viable, and a critic might respond by forking the piece, adjusting the economic model to use different assumptions, and publishing the fork as a counter-argument that the audience could directly compare to the original. This was, in theory, the most rigorous form of intellectual discourse ever devised: an argument in which every claim was executable and every counter-claim could be tested against the same data. In practice, it often devolved into increasingly elaborate simulations that proved only that any conclusion could be supported if you chose the right parameters, which was the computational equivalent of two people shouting past each other, but with better graphics.

She opened with the dock. She described the longshoremen, the containers, the slider. She described the way the piece made you feel the weight of a crate and the speed of a crane and the emptiness of a dock after automation. She described the transition from shipping to software, and she was careful to flag the places where Hollande’s argument was weakest: the assumption that software costs would follow the same curve as shipping costs, the underweighting of regulatory friction, the conspicuous absence of any discussion of environmental costs.

Then she wrote the part that she knew would get quoted, because critics learn quickly which of their sentences will have a life beyond the review, and she wanted to get this one right:

The Long Freight is not about containerization, and it is not about software. It is about what happens to human beings when the cost of making things falls to zero. The answer, which Hollande earns through four hours of rigorous, beautiful, occasionally infuriating interactive argument, is: they find new things to make, and new reasons to make them, and new problems created by the making, and new people to solve those problems, forever, in an endless chain of creation and consequence that looks like chaos from inside and like progress from very far away. The longshoremen could not have imagined the 500,000 jobs. I could not have imagined my own career ten years ago. Whatever comes next will be equally unrecognizable to me, and I will be equally wrong about what I think I’m losing. Hollande is not trying to imagine it. He is trying to give us the tools to imagine it ourselves. This is the highest ambition of the medium, and he very nearly achieves it.

She read it back. She cut the last sentence and replaced it: This is the highest ambition of the medium. Whether he achieves it depends entirely on you.

She filed the review.

In the office, Julian was still at his desk, working through the last of the day’s submissions. The encryption piece had been fixed and resubmitted; the creator had tightened the spec and the security vulnerability was gone. The bird migration piece now properly discarded location data after use. The corporate satire still spawned too many threads, but the creator had at least added a rate limiter, which Julian considered progress.

He glanced at the time. It was late. The queue for tomorrow was already growing: creators on the West Coast were just hitting their stride, and the Asian submissions would start arriving in a few hours. The wave never stopped. The tools got cheaper, the pieces got more ambitious, the problems got more subtle, and the queue grew.

He closed his laptop and looked at the building around him. The old drum booth. The former print shop. The stable. Every room in this building had been a place where people made things that other people experienced, and every technology that enabled the making had eventually been subsumed by the next one.

But the making continued. That was the part that didn’t change. The medium shifted, the tools evolved, the platforms rose and fell and rose again, but the itch to construct something and put it in front of another human being and say “here, look at this, I made this, what do you think” was as old as humanity itself.

Julian turned off the light and went home. Tomorrow there would be more to review.

The Long Freight is available on Meridian. It runs in approximately four hours, though this varies significantly by user. It is rated S-2 for safety (sandbox required, no external data access) and C-3 for complexity (requires sustained attention, not suitable for casual consumption). It has been experienced by 2.4 million users as of this writing, and each of them saw something different.
